The Data Fragmentation Problem

The average mid-market company uses 130+ SaaS applications. Each one has its own data format, its own schema, and its own API conventions. Customer information lives in the CRM, financial data in the ERP, marketing metrics in analytics platforms, and operational data in spreadsheets. When someone asks a cross-functional question like "what is the lifetime value of customers acquired through paid search?", answering it means joining data from all four of those systems. AI data pipelines solve this by creating automated, intelligent connections between the data sources in your stack.

ETL Automation

Extract data from APIs, databases, files, and webhooks. Transform it using AI-powered mapping that learns your schema relationships. Load clean, consistent records into your data warehouse or operational systems.

Data Normalization

Standardize formats across systems: date formats, currency codes, address structures, product SKUs, and customer identifiers. AI resolves fuzzy matches when records do not align perfectly.
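
As a rough illustration of what format standardization involves, the sketch below converts dates to ISO 8601 and cleans up currency codes. The list of date layouts and the function names are hypothetical, and the choice to read "03/07/2024" as month/day/year is an assumption that would need to be confirmed per source system.

```python
from datetime import datetime

# Hypothetical list of date layouts seen across source systems.
# Order matters: the first layout that parses wins.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d")


def normalize_date(raw: str) -> str:
    """Return the date as ISO 8601 (YYYY-MM-DD), or raise ValueError."""
    text = raw.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def normalize_currency(raw: str) -> str:
    """Return an upper-case three-letter code; this checks shape only,
    not membership in the ISO 4217 list."""
    code = raw.strip().upper()
    if len(code) != 3 or not code.isalpha():
        raise ValueError(f"not a three-letter currency code: {raw!r}")
    return code


print(normalize_date("03/07/2024"))   # 2024-03-07
print(normalize_currency(" usd "))    # USD
```

Values that fail to parse raise an error rather than passing through silently, so they can be routed to a review queue.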

Cross-System Sync

Keep data consistent across Salesforce, HubSpot, QuickBooks, Shopify, and internal databases. Bi-directional sync handles conflict resolution with configurable precedence rules.
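
One way to picture configurable precedence rules is a per-field ordering of systems, where the first system holding a non-empty value wins. The sketch below assumes two hypothetical system labels ("crm" and "billing") and a made-up precedence table. It does not reflect the API of any of the products named above.

```python
# Hypothetical precedence table: for each field, systems are listed
# from most trusted to least trusted.
FIELD_PRECEDENCE = {
    "email": ["crm", "billing"],
    "billing_address": ["billing", "crm"],
}
DEFAULT_PRECEDENCE = ["crm", "billing"]


def resolve(field, candidates):
    """Pick the value from the highest-precedence system that has one.

    candidates maps system name -> value. Returns (system, value),
    or (None, None) when no system has a usable value.
    """
    for system in FIELD_PRECEDENCE.get(field, DEFAULT_PRECEDENCE):
        value = candidates.get(system)
        if value not in (None, ""):
            return system, value
    return None, None


def merge_records(by_system):
    """Merge one entity's records from several systems, field by field."""
    fields = sorted({f for rec in by_system.values() for f in rec})
    merged = {}
    for field in fields:
        candidates = {s: rec.get(field) for s, rec in by_system.items()}
        _, merged[field] = resolve(field, candidates)
    return merged


records = {
    "crm": {"email": "a@x.com", "billing_address": "1 Old St"},
    "billing": {"email": "b@x.com", "billing_address": "2 New Ave"},
}
print(merge_records(records))
```

Here the CRM wins for the email field and the billing system wins for the billing address. Changing the table changes the outcome without touching the merge logic.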

Format Conversion

Convert between JSON, XML, CSV, Parquet, Avro, and proprietary formats automatically. Schema evolution handling ensures pipelines do not break when upstream systems change their data structure.
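
A minimal sketch of a JSON-to-CSV conversion that tolerates upstream schema changes, using only the Python standard library. The target column list is hypothetical. Fields missing from a record are written as empty cells, and fields the target does not know about are ignored rather than causing a failure.

```python
import csv
import io
import json

# Hypothetical target schema for the CSV output.
TARGET_COLUMNS = ("id", "email", "plan")


def json_to_csv(json_text, columns=TARGET_COLUMNS):
    """Convert a JSON array of objects to CSV text with fixed columns."""
    records = json.loads(json_text)
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=columns,
        restval="",             # value for columns a record lacks
        extrasaction="ignore",  # skip fields not in the target schema
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


source = (
    '[{"id": 1, "email": "a@x.com"},'
    ' {"id": 2, "email": "b@x.com", "plan": "pro", "region": "EU"}]'
)
print(json_to_csv(source))
```

The second record carries a new "region" field that the target does not have yet. The conversion still succeeds, which is the behavior the schema evolution handling described above is meant to guarantee.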

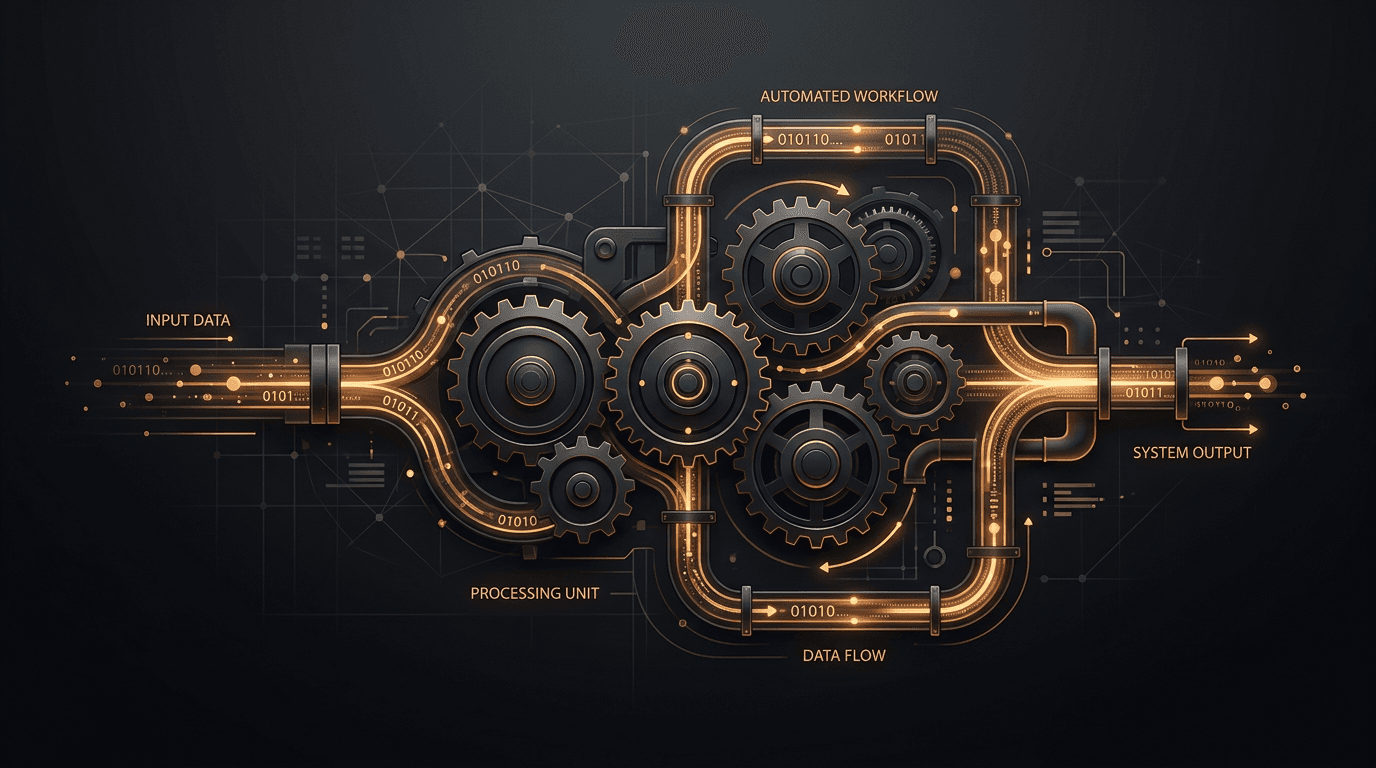

Data Pipeline Architecture

Extract

Pull from APIs, databases, files

Transform

AI maps, normalizes, deduplicates

Validate

Schema checks and quality rules

Load

Write to warehouse or target system
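
The four stages above can be sketched as plain functions chained together. This is an in-memory illustration only: the source and target are Python lists, and simple rules stand in for the AI-assisted transform step. All function names are hypothetical.

```python
def extract(source):
    """Stand-in for pulling rows from an API, database, or file."""
    return list(source)


def transform(rows):
    """Normalize emails and drop duplicates, keeping the first occurrence."""
    seen, out = set(), []
    for row in rows:
        email = str(row.get("email") or "").strip().lower()
        if email in seen:
            continue
        seen.add(email)
        out.append({**row, "email": email})
    return out


def validate(rows):
    """Split rows into (valid, rejected) using simple quality rules."""
    valid, rejected = [], []
    for row in rows:
        ok = "@" in row["email"] and isinstance(row.get("id"), int)
        (valid if ok else rejected).append(row)
    return valid, rejected


def load(rows, target):
    """Stand-in for writing to a warehouse; returns the rows written."""
    target.extend(rows)
    return len(rows)


def run_pipeline(source, target):
    valid, rejected = validate(transform(extract(source)))
    return {"loaded": load(valid, target), "rejected": len(rejected)}


source = [
    {"id": 1, "email": " A@X.com "},
    {"id": 2, "email": "a@x.com"},       # duplicate after normalization
    {"id": 3, "email": "not-an-email"},  # fails validation
]
warehouse = []
print(run_pipeline(source, warehouse))
```

Keeping validation as its own stage means rejected rows are counted and can be inspected, instead of being silently dropped during transformation.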

How We Build Data Pipelines

Every pipeline starts with a data audit. We catalog your systems, document the data each one produces and consumes, and identify the integration points where manual processes currently bridge the gaps. This audit typically reveals redundant data entry, conflicting records, and latency problems that cost real money.

Intelligent schema mapping. Traditional ETL requires developers to manually map fields between source and target. Our pipelines use AI to suggest mappings based on column names, data types, and sample values. For example, a field called "cust_email" in one system is proposed as a match for "contact.email" in another. You review and approve the mapping once, and it applies to all future records.
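
To make the idea of suggested mappings concrete, here is a deliberately simple sketch that scores candidate target fields by name similarity and requires matching data types. It uses plain string similarity from the standard library, not a learned model, and it ignores sample values. The target schema, the scoring weights, and the 0.3 cutoff are all assumptions chosen for illustration.

```python
import re
from difflib import SequenceMatcher


def tokens(name):
    """Split a field name into lower-case word tokens."""
    return [t for t in re.split(r"[^a-z0-9]+", name.lower()) if t]


def name_score(source, target):
    """Blend shared-token overlap with character-level similarity."""
    a, b = tokens(source), tokens(target)
    union = set(a) | set(b)
    jaccard = len(set(a) & set(b)) / len(union) if union else 0.0
    ratio = SequenceMatcher(None, " ".join(a), " ".join(b)).ratio()
    return 0.5 * jaccard + 0.5 * ratio


def suggest_mapping(source_name, source_type, target_schema, min_score=0.3):
    """Return the best type-compatible target field, or None."""
    candidates = [
        (name_score(source_name, target), target)
        for target, target_type in target_schema.items()
        if target_type == source_type
    ]
    if not candidates:
        return None
    score, best = max(candidates)
    return best if score >= min_score else None


# Hypothetical target schema: field name -> data type.
TARGET = {"contact.email": "str", "contact.phone": "str", "order.total": "float"}
print(suggest_mapping("cust_email", "str", TARGET))  # contact.email
```

The function only proposes a mapping. In the workflow described above, a person would still review and approve it before it is applied.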

Entity resolution and deduplication. When merging data from multiple systems, duplicates are inevitable. Our pipelines use probabilistic matching with configurable thresholds to identify when "John Smith at Acme Corp" in your CRM is the same person as "J. Smith, Acme Corporation" in your billing system. Match decisions are logged for audit and can be overridden manually.
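
The sketch below shows threshold-based matching on the example above. It is a weighted string-similarity score, not a full probabilistic model, and every weight, threshold, and suffix list in it is an assumption made for illustration. Each decision is appended to an audit log so it can be reviewed or overridden later.

```python
import re
from difflib import SequenceMatcher

# Hypothetical configuration values.
COMPANY_SUFFIXES = {"corp", "corporation", "inc", "llc", "ltd", "co"}
MATCH_THRESHOLD = 0.85
REVIEW_THRESHOLD = 0.60


def words(text):
    return re.findall(r"[a-z]+", text.lower())


def similarity(a, b):
    return SequenceMatcher(None, a, b).ratio()


def name_similarity(a, b):
    """Compare last names directly; treat a matching initial as partial evidence."""
    ta, tb = words(a), words(b)
    if not ta or not tb:
        return 0.0
    last = similarity(ta[-1], tb[-1])
    fa, fb = ta[0], tb[0]
    if len(fa) == 1 or len(fb) == 1:
        first = 0.8 if fa[0] == fb[0] else 0.0
    else:
        first = similarity(fa, fb)
    return 0.4 * first + 0.6 * last


def company_similarity(a, b):
    """Compare company names after dropping legal suffixes."""
    ca = " ".join(w for w in words(a) if w not in COMPANY_SUFFIXES)
    cb = " ".join(w for w in words(b) if w not in COMPANY_SUFFIXES)
    return similarity(ca, cb) if ca and cb else 0.0


def match_decision(a, b, audit_log):
    """Score a pair of records, log the decision, and return it."""
    score = (0.6 * name_similarity(a["name"], b["name"])
             + 0.4 * company_similarity(a["company"], b["company"]))
    if score >= MATCH_THRESHOLD:
        decision = "match"
    elif score >= REVIEW_THRESHOLD:
        decision = "review"
    else:
        decision = "no_match"
    audit_log.append({"a": a, "b": b, "score": round(score, 3), "decision": decision})
    return decision


log = []
crm = {"name": "John Smith", "company": "Acme Corp"}
billing = {"name": "J. Smith", "company": "Acme Corporation"}
print(match_decision(crm, billing, log))  # match
```

The middle "review" band is where a manual override fits: pairs that score between the two thresholds go to a person instead of being merged automatically.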

Orchestration and monitoring. Pipelines run on Apache Airflow, Prefect, or Dagster depending on your infrastructure. Each run is logged with row counts, processing times, and error details. Alerting integrates with PagerDuty, Slack, or email so your team knows immediately when a pipeline needs attention.
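
The per-run logging and alerting described above can be illustrated without any orchestrator. The sketch below is a standard-library stand-in, not the API of Airflow, Prefect, or Dagster. The function name and the record fields are hypothetical, and the alert callback is where a PagerDuty, Slack, or email notifier would be plugged in.

```python
import time


def run_with_monitoring(name, task, alert):
    """Run task(), which returns a row count, and return a run record.

    On failure the record captures the error details and is passed
    to alert(record).
    """
    started = time.monotonic()
    record = {"pipeline": name, "status": "success", "rows": 0, "error": None}
    try:
        record["rows"] = task()
    except Exception as exc:
        record["status"] = "failed"
        record["error"] = f"{type(exc).__name__}: {exc}"
    record["seconds"] = round(time.monotonic() - started, 3)
    if record["status"] == "failed":
        alert(record)
    return record


def broken_task():
    raise RuntimeError("source API timed out")


alerts = []
print(run_with_monitoring("orders", lambda: 42, alerts.append))
print(run_with_monitoring("orders", broken_task, alerts.append))
```

In this example the alert callback simply appends to a list. The first run succeeds with 42 rows and raises no alert, while the second fails and produces one.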

Who This Is For

Data pipeline automation is essential for businesses running more than five interconnected software systems. E-commerce companies syncing inventory across channels, financial firms aggregating market data, healthcare organizations consolidating patient records, and SaaS companies building analytics products from operational data are the strongest fits.

If your team maintains brittle integrations, exports CSV files to move data between systems, or cannot answer cross-functional questions without days of manual work, contact us at ben@oakenai.tech to discuss building a reliable data foundation.